Over the past weeks, our development team has been working on automating our development process, including setting up CI/CD pipelines. Although we had worked on automation for customers, we did not yet have anything in place for Just-BI’s own development. Another aim of this project was to compare AWS CodePipeline with GitHub Actions.

CI/CD

With any source control tool, code is maintained in one place and developers are constantly aware of what their team members are doing. Continuous integration and continuous delivery (abbreviated: CI/CD) is a widely used practice that helps a development team deliver code more frequently and in a more reliable manner. As the complete build, test, and delivery tasks are automated, you can push your work daily, and bugs can be found soon after code changes are applied.

If the build passes all the tests, the software can be automatically deployed to the desired environment. This automated step is referred to as continuous delivery. The CD pipeline executes a number of steps of varying complexity, depending on the scope of the pipeline. Think of steps that create or configure the infrastructure, move code to the correct environment, manage the environment variables, restart services (endpoints), execute tests, or provide log data on the state of the delivery.

Containers

In CI/CD pipelines for cloud environments, developers often use container technology such as Docker. Containers make it easy to package and ship applications in a standardized way. A container packages code and all its dependencies, so the application runs the same way in any environment that has a container runtime. This helps to solve the “it works on my machine” problem.

In summary, a CI/CD pipeline helps to compile, build, test, and deploy code to the production platforms. It takes code from your version control system and makes your application available to users in an automated process. This avoids manual work and therefore human error, which in theory results in a faster time to market.
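To make the idea concrete, here is a minimal sketch of what a pipeline does at its core: run a series of stages in order and stop as soon as one fails, so a failing test never reaches the deploy stage. The stage names and the `run_pipeline` helper are our own illustration, not part of any CI/CD product.

```python
from typing import Callable, List, Tuple

# Each stage is a name plus a function that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed: List[str] = []
    for name, step in stages:
        if not step():
            print(f"stage '{name}' failed, stopping pipeline")
            break
        completed.append(name)
    return completed


# Hypothetical stages; real ones would call a compiler, a test runner, etc.
example_stages: List[Stage] = [
    ("build", lambda: True),
    ("test", lambda: False),  # a failing test blocks the deployment
    ("deploy", lambda: True),
]
```

Calling `run_pipeline(example_stages)` completes only the build stage, because the failing test stage stops everything after it.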

Now that we know what CI/CD is and what it does, let’s dive into two CI/CD pipeline options: AWS CodePipeline and GitHub Actions.

AWS CodePipeline and CodeBuild

The Amazon cloud platform (AWS) offers a number of solutions for CI/CD. We investigated the main ones.

AWS CodePipeline is a continuous delivery service in which a pipeline consists of different stages. A stage is a logical unit that you can use to isolate an environment and to limit changes in that environment. In each stage, you can execute one or more actions on artifacts. These artifacts are collections of data for your application, for example source code, dependencies, definition files, and so on.
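The relationship between stages, actions, and artifacts can be sketched as a small data structure. This is a simplified model of our own, not the actual CodePipeline definition format: all stage, action, and artifact names are hypothetical, and we assume that an artifact only becomes available to later stages. The check reports every input artifact that no earlier stage produced.

```python
from typing import Dict, List

# Simplified pipeline: each stage has actions with input/output artifact names.
# All names below are hypothetical.
pipeline: List[Dict] = [
    {"name": "Source", "actions": [
        {"name": "Checkout", "inputs": [], "outputs": ["SourceOutput"]},
    ]},
    {"name": "Build", "actions": [
        {"name": "CompileAndTest", "inputs": ["SourceOutput"], "outputs": ["BuildOutput"]},
    ]},
    {"name": "Deploy", "actions": [
        {"name": "DeployToServer", "inputs": ["BuildOutput"], "outputs": []},
    ]},
]


def missing_artifacts(stages: List[Dict]) -> List[str]:
    """Return 'stage/action: artifact' for inputs that no earlier stage produced."""
    available = set()
    problems: List[str] = []
    for stage in stages:
        produced = set()
        for action in stage["actions"]:
            for artifact in action["inputs"]:
                if artifact not in available:
                    problems.append(f"{stage['name']}/{action['name']}: {artifact}")
            produced.update(action["outputs"])
        # In this simplified model, outputs are visible to later stages only.
        available |= produced
    return problems
```

For the pipeline above, `missing_artifacts(pipeline)` returns an empty list. Removing the Build stage would make the Deploy action report its missing `BuildOutput` input.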

AWS CodePipeline is typically used together with AWS CodeBuild, a continuous integration service that compiles source code, executes tests, and builds ready-to-deploy software packages.

The different stages of the CodePipeline we set up are:

-Source stage.

AWS CodePipeline supports source code that is hosted on Amazon S3, AWS CodeCommit, or GitHub. As we use a shared GitHub organization to store the source code of our applications, the source for our CodePipeline is the GitHub repository of this specific application. We connected the GitHub repository via a webhook. Each time a new release is pushed to master, the pipeline is triggered.

-Build stage with AWS CodeBuild.

CodeBuild compiles your code, runs tests, and produces ready-to-deploy artifacts. It also manages the build servers for you. CodeBuild works with a BuildSpec file (build specification) that, by default, is stored in the root of your source directory. The file specifies the build commands and related settings that are used to run a build. We use the CodeBuild project as a build step in the pipeline. Because we had already set up a webhook on the pipeline in the source stage, we disabled webhooks in CodeBuild. If we had not disabled the webhook in this project, every push would have triggered two builds: one on the CodeBuild project and one through CodePipeline. AWS bills CodeBuild per build minute, so it’s a cost-saving setting!
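The effect of the duplicate webhook is easy to express as a small calculation. The sketch below is our own illustration, with hypothetical function names and example numbers; it only counts builds and build minutes and does not assume any actual AWS price.

```python
def builds_per_push(pipeline_webhook: bool, project_webhook: bool) -> int:
    """Count the builds that a single push to the tracked branch starts."""
    builds = 0
    if pipeline_webhook:
        builds += 1  # the pipeline runs its build stage
    if project_webhook:
        builds += 1  # the CodeBuild project also starts a build of its own
    return builds


def billed_minutes(pushes: int, minutes_per_build: float,
                   pipeline_webhook: bool = True,
                   project_webhook: bool = False) -> float:
    """Total build minutes for a number of pushes."""
    return pushes * minutes_per_build * builds_per_push(pipeline_webhook, project_webhook)
```

With 10 pushes and builds of 5 minutes each, leaving both webhooks enabled gives `billed_minutes(10, 5, True, True)`, which is 100 minutes, while disabling the project webhook halves that to 50.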

-Deploy stage with AWS CodeDeploy.

CodeDeploy automates software deployments to compute services. For this pipeline, we deploy to an EC2 instance. CodeDeploy needs an AppSpec file in the root of your application’s directory. This file manages each deployment as a series of lifecycle event hooks. In the case of EC2, it allows you to map the source files of your revision to their destination on the instance, manage permissions for the deployed files, and define the scripts that need to run on the instance. For us, the main disadvantage of AWS CodeDeploy is that it only deploys to AWS services that are already running. That’s why we decided to also investigate AWS Elastic Beanstalk; more on that later in this blog.
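To give an impression of what such an AppSpec file describes, the sketch below mirrors its main sections (files, permissions, hooks) as a Python dictionary and lists the hook scripts in the order in which CodeDeploy runs the scriptable lifecycle events of an in-place EC2 deployment without a load balancer. The real file is written in YAML; the paths, owner, and script names here are hypothetical and are not taken from our project.

```python
from typing import Dict, List, Tuple

# Scriptable lifecycle events of an in-place EC2 deployment, in run order.
HOOK_ORDER = [
    "ApplicationStop",
    "BeforeInstall",
    "AfterInstall",
    "ApplicationStart",
    "ValidateService",
]

# Hypothetical AppSpec content, expressed as a dictionary.
appspec: Dict = {
    "version": 0.0,
    "os": "linux",
    # Map files of the revision to their destination on the instance.
    "files": [{"source": "/", "destination": "/opt/app"}],
    # Permissions for the deployed files.
    "permissions": [{"object": "/opt/app", "owner": "appuser", "mode": "755"}],
    # Scripts to run at each lifecycle event.
    "hooks": {
        "ApplicationStart": [{"location": "scripts/start.sh", "timeout": 60}],
        "ApplicationStop": [{"location": "scripts/stop.sh", "timeout": 60}],
        "AfterInstall": [{"location": "scripts/install_deps.sh", "timeout": 300}],
    },
}


def scripts_in_run_order(spec: Dict) -> List[Tuple[str, str]]:
    """Return (event, script) pairs in lifecycle order, skipping unused events."""
    ordered: List[Tuple[str, str]] = []
    for event in HOOK_ORDER:
        for hook in spec["hooks"].get(event, []):
            ordered.append((event, hook["location"]))
    return ordered
```

Note that the order in which hooks are written in the file does not matter: the stop script runs first and the start script last, whatever their position in the `hooks` section.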

GitHub Actions

GitHub Actions is a service integrated into GitHub. It has an open marketplace with over 6000 actions to improve your workflow. These actions are created by users as well as by trusted organizations, like AWS, Docker, or GitHub. Workflows can be triggered by several GitHub events, like a push or a pull request. We decided to build a CI/CD pipeline for our Juicy server with GitHub Actions. This required a lot of build steps, so I have summarized them into four main steps:

-Install dependencies.

First, we make sure that we are using the correct source code, history, and Git version, and we install all the dependencies we need.

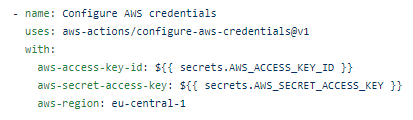

-Configure AWS credentials.

As we want to deploy our server with Amazon services, we make sure that we configure the correct AWS credentials: the AWS access key, the AWS secret access key, and the AWS region.
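A quick sanity check for this step could look like the sketch below. It assumes that the credentials are exposed as the environment variables commonly read by AWS tools (`AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`, and `AWS_REGION` or `AWS_DEFAULT_REGION`); the helper name is our own, and this is not the code of the GitHub Action we used.

```python
import os
from typing import List, Mapping


def missing_aws_settings(env: Mapping[str, str]) -> List[str]:
    """Return the names of the AWS settings that are absent or empty."""
    missing = [
        name
        for name in ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")
        if not env.get(name)
    ]
    # Assumption: either of the two region variables is acceptable.
    if not (env.get("AWS_REGION") or env.get("AWS_DEFAULT_REGION")):
        missing.append("AWS_REGION")
    return missing


# Inspect the current process environment; prints an empty list if all is set.
print(missing_aws_settings(os.environ))
```

Running such a check early in the workflow makes a missing secret fail fast, instead of surfacing as an authentication error in a later step.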

-Amazon Elastic Container Registry (ECR).

Next, we want to push a Docker container image to ECR. ECR is AWS’s Docker container registry, which helps to store, manage, and deploy Docker container images. It hosts your images and allows you to deploy containers in a reliable way. We have a couple of steps dedicated to ECR. After logging in to ECR, we install the AWS CLI with a GitHub Action, so that later steps can send ECR commands through the AWS CLI. After this, we retag our previous ECR image, build a new Docker image, tag it, and push it to the registry.

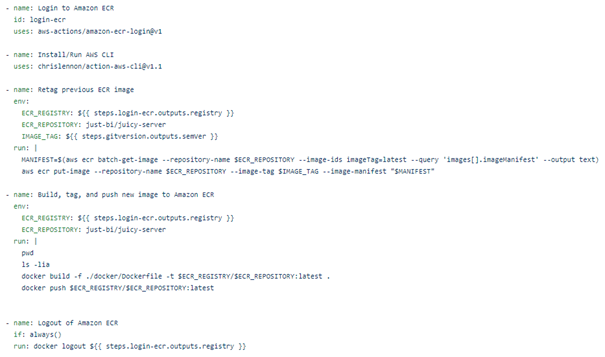
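The retag, build, and push sequence can be sketched as follows. The image URI format is the one ECR uses for private repositories; everything else is an assumption for illustration: the tag names `latest` and `previous`, the repository name, and the exact order of commands are not taken from our workflow. The function only builds the command lists and does not execute anything.

```python
from typing import List


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str) -> str:
    """Build the full URI of an image in a private ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def release_commands(account_id: str, region: str, repository: str,
                     new_tag: str) -> List[List[str]]:
    """Docker commands to keep the old image as 'previous' and publish a new one."""
    latest = ecr_image_uri(account_id, region, repository, "latest")
    previous = ecr_image_uri(account_id, region, repository, "previous")
    new = ecr_image_uri(account_id, region, repository, new_tag)
    return [
        ["docker", "pull", latest],             # fetch the image currently in use
        ["docker", "tag", latest, previous],    # retag it so it stays available
        ["docker", "push", previous],
        ["docker", "build", "-t", new, "-t", latest, "."],  # build and tag the new image
        ["docker", "push", new],
        ["docker", "push", latest],
    ]
```

In a real workflow, each list could be passed to `subprocess.run(command, check=True)` after logging in to the registry.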

-Amazon Elastic Beanstalk (EB).

We decided to use EB for our server, as it not only handles the deployment on AWS automatically, but can also launch additional AWS resources and deploy code changes. We used several GitHub Actions for this. For EB to work, you first need to create an EB application, which serves as a container for the environments that run our server. Once the application is set up, you can create an EB environment, which runs a version of the application. After creating the environment once, you can change this step to a deploy step, as from this point on you only want to deploy a new version of your application. The last step that we perform in Elastic Beanstalk is sending the environment variables that the application needs. We store these as secrets in GitHub, and the workflow picks them up from there.
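As an illustration of the last two points, the sketch below chooses between creating and deploying, and converts a set of environment variables into the option-settings form that Elastic Beanstalk uses for environment properties (the `aws:elasticbeanstalk:application:environment` namespace). The helper names and the example variables are hypothetical; in our pipeline this work is done by GitHub Actions, not by this code.

```python
from typing import Dict, List

# Namespace under which Elastic Beanstalk stores environment properties.
ENV_NAMESPACE = "aws:elasticbeanstalk:application:environment"


def next_step(environment_exists: bool) -> str:
    """Create the environment on the first run, deploy on every later run."""
    return "deploy" if environment_exists else "create"


def to_option_settings(variables: Dict[str, str]) -> List[Dict[str, str]]:
    """Convert name/value pairs into Elastic Beanstalk option settings."""
    return [
        {"Namespace": ENV_NAMESPACE, "OptionName": name, "Value": value}
        for name, value in sorted(variables.items())
    ]


# Hypothetical variables; in the workflow these would come from GitHub secrets.
example_settings = to_option_settings({"PORT": "8080", "LOG_LEVEL": "info"})
```

The resulting list has one entry per variable, sorted by name, so repeated runs produce the same configuration.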

Comparison

Which solution is best for your situation depends on a lot of things, and there can be many different reasons and considerations for choosing between the AWS solutions and GitHub Actions. For us, for now, the most important factors were:

- Pricing. Both solutions are priced per build minute. Additionally, AWS charges a fixed cost per month per pipeline. The advantage of GitHub Actions here is that a maximum number of minutes is defined, so the final costs are easier to predict.

- Source code repository. GitHub Actions only supports GitHub as a source code repository, while AWS CodePipeline also allows Bitbucket, AWS CodeCommit and Amazon S3.

- Integrations. As discussed before, GitHub Actions works with an open marketplace. The actions in it vary in quality, as anyone can publish one. In comparison, AWS has its own, high-quality integrations that it builds itself.

- AWS authentication. The big advantage of CodePipeline is that authentication is handled with IAM roles instead of access keys for IAM users. With GitHub Actions, you must store the IAM user’s access keys in GitHub secrets.

- Ease of use. GitHub Actions is very easy to use, while CodePipeline is more difficult to get started with. CodePipeline becomes more valuable as soon as you integrate it with other Amazon services (e.g. CodeBuild, CodeDeploy, and more).
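The pricing point can be turned into a simple formula: a fixed monthly fee plus the billable build minutes times the price per minute, where an optional cap on the number of minutes puts an upper bound on the bill. The sketch below is our own simplification with hypothetical parameter names; the rates in the usage note are made-up example values, not actual AWS or GitHub prices.

```python
from typing import Optional


def monthly_cost(build_minutes: float, price_per_minute: float,
                 fixed_fee: float = 0.0,
                 minute_cap: Optional[float] = None) -> float:
    """Estimate a monthly bill from build minutes, a rate, and a fixed fee.

    If minute_cap is given, minutes above the cap are never billed,
    which makes the maximum cost known in advance.
    """
    billable = build_minutes
    if minute_cap is not None:
        billable = min(build_minutes, minute_cap)
    return fixed_fee + billable * price_per_minute
```

With a made-up rate of 0.5 per minute, `monthly_cost(100, 0.5, fixed_fee=1.0)` models a pipeline with a fixed fee, and `monthly_cost(500, 0.5, minute_cap=200)` models a capped plan whose cost cannot exceed 200 minutes at that rate.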

Conclusion

During this project we set up two pipelines, one with each provider. This gave us a lot of insight into the possibilities and limitations of each solution. If you want more information on any of the topics that I discussed, please refer to the documentation of Amazon, GitHub, or Docker.

https://github.com/features/actions