For one of Just-BI‘s customers, we’re currently working to productize a custom-built proof-of-concept SAP/HANA application.

This particular application is quite typical with regard to how we use SAP/HANA features and techniques: it is a web-application for desktop and tablet devices, that we serve through SAP/HANA’s XS engine. For database communication, we mostly use OData (using XS OData Services), as well as the odd xsjs request (in this case, to offer MS Excel export using ODXL)

The OData services that our application uses are mostly backed by SAP/HANA Calculation views. These, in turn, are built on top of a mixed bag of objects:

- Some of these are custom base tables that belong to just our application;

- Some are base tables that collect output from an advanced analytics recommendations algorithm that runs in an external R server

- Some are information views (analytic views, attribute views and calculations views) that form a virtual datamart (of sorts) on top of base tables replicated from various SAP ERP source systems to our SAP/HANA database.

One of the prerequisites to productize the current solution is a re-design of the backend. Redesign is required because the new target system will be fed from even more ERP source systems than our proof-of-concept environment, and the new backend will need to align the data from all these different ERP implementations. In addition, the R algorithm will be optimized as well: in the proof-of-concept environment, the advanced analytics algorithm passes through a number of fields for convenience that will need to be acquired from elsewhere in the production environment.

To facilitate the redesign we need to have accurate insight into which base columns are ultimately used to implement our application’s data services. As it turns out, this is not so easily obtainable using standard tools. So, we developed something ourselves. We think this may be useful for others as well, which is why we’d like to share it with you through this blog.

Information View Dependencies

The standard toolset offers some support to obtain dependencies for information views (analytic views, attribute views and calculation views):

- If you’re a HANA Studio user, you might be able to use the “Where-used-list” and/or “Column lineage” features. Check out Krishnamoh Krishna’s wonderful blog about this topic.

- You can query the

OBJECT_DEPENDENCIESsystem view

As it turns out, these standard tools do not give us the detailed information that we need. The HANA studio features are mainly useful when designing and modifying information views, but do not let us obtain an overview of all dependencies, and not in a way that we can easily use outside of HANA Studio. The usefulness of querying the OBJECT_DEPENDENCIES system view is limited by the fact that it only reports objects – that is, base tables or information views – but not the columns contained therein.

It looks like we’re not the only ones struggling with this issue.

Getting the information view’s definition as XML from _SYS_REPO.ACTIVE_OBJECT

To get the kind of information we need, we’re just going to have to crack open the definition of the information view and look what’s inside. As it turns out, HANA stores this as XML in the CDATA column of the _SYS_REPO.ACTIVE_OBJECT system table, and we can query it by package name, object name and object suffix (which is basically the extension of the file containing the definition that is stored in the repository):

SELECT CDATA FROM _SYS_REPO.ACTIVE_OBJECT WHERE PACKAGE_ID = 'my.package.name' AND OBJECT_NAME = 'CA_MY_CALCULATION_VIEW' AND OBJECT_SUFFIX = 'calculationview'

With some effort, _SYS_REPO.ACTIVE_OBJECT can be joined to OBJECT_DEPENDENCIES to discover the objects on which the information view depends:

SELECT od.BASE_SCHEMA_NAME , od.BASE_OBJECT_NAME , od.BASE_OBJECT_TYPE FROM _SYS_REPO.ACTIVE_OBJECT ao INNER JOIN OBJECT_DEPENDENCIES od ON '_SYS_BIC' = od.DEPENDENT_SCHEMA_NAME AND ao.PACKAGE_ID||'/'||ao.OBJECT_NAME = od.DEPENDENT_OBJECT_NAME AND 'VIEW' = od.DEPENDENT_OBJECT_TYPE WHERE ao.PACKAGE_ID = 'my.package.name' AND ao.OBJECT_NAME = 'CA_MY_CALCULATION_VIEW' AND ao.OBJECT_SUFFIX = 'calculationview'

(Note: OBJECT_DEPENDENCIES reports all dependencies, not just direct dependencies)

Or we can query the other way around, and find the corresponding model for a dependency we found in OBJECT_DEPENDENCIES:

SELECT ao.PACKAGE_ID

, ao.OBJECT_NAME

, ao.OBJECT_SUFFIX

, ao.CDATA

FROM object_dependencies od

INNER JOIN _SYS_REPO.ACTIVE_OBJECT ao

ON SUBSTR_BEFORE(od.base_object_name, '/') = ao.package_id

AND SUBSTR_AFTER(od.base_object_name, '/') = ao.object_name

AND ao.object_suffix in (

'analyticview'

, 'attributeview'

, 'calculationview'

)

WHERE od.DEPENDENT_SCHEMA_NAME = '_SYS_BIC'

AND od.DEPENDENT_OBJECT_NAME = 'my.package.name/CA_MY_CALCULATION_VIEW'

AND od.DEPENDENT_OBJECT_TYPE = 'VIEW'

NOTE: It turns out that querying OBJECT_DEPENDENCIES fails at reporting dependencies between analytic views and the attribute views they use. To capture those dependencies, you need to query _SYS_REPO.ACTIVE_OBJECTCROSSREF.

Parsing the information view’s XML definition with stored procedure p_parse_xml

Once we obtained the XML that defines the information view, we still need to pull it apart so we can figure out how it is tied to our base table columns. To do that, we first apply a general XML parser that turns the XML text into a (temporary) table or table variable, such that each row represents a distinct, atomic element inside the XML document. For this purpose I developed a HANA stored procedure called p_parse_xml. Here is its signature:

create PROCEDURE p_parse_xml (

-- XML string to parse

p_xml nclob

-- Parse tree is returned as a table variable

, out p_dom table (

-- unique id of the node

node_id int

-- id of the parent node

, parent_node_id int

-- dom node type constant: 1=element, 2=attribute, 3=text, 4=cdata,

-- 5=entityref, 6=entity, 7=processing instruction,

-- 8=comment, 9=document, 10=document type,

-- 11=document fragment, 12=notation

, node_type tinyint

-- dom node name: tagname for element, attribute name for attribute,

-- target for processing instruction,

-- document type name for document type,

-- "#text" for text and cdata, "#comment" for comment,

-- "#document" for document, "#document-fragment" for document fragment.

, node_name nvarchar(64)

-- dom node value: text for text, comment, and cdata nodes, data for processing instruction node, null otherwise.

, node_value nclob

-- raw token from the parser

, token_text nclob

-- character position of token

, pos int

-- lenght of token.

, len int

)

-- flag whether to strip text nodes that only contain whitespace from the parse tree

, p_strip_empty_text tinyint default 1

)

Note that you can download the source dode for the entire procedure from github. The p_parse_xml procedure depends on p_decode_xml_entities, so if you want to run it yourself, be sure to install that first.

To see how you can use this, consider the following, simple example:

call p_parse_xml(

'<parent-element attribute1="value1">

<child-element attribute2="value2" attribute3="value3">

text-content1

</child-element>

<child-element att="bla">

text-content2

</child-element>

</parent-element>', ?);

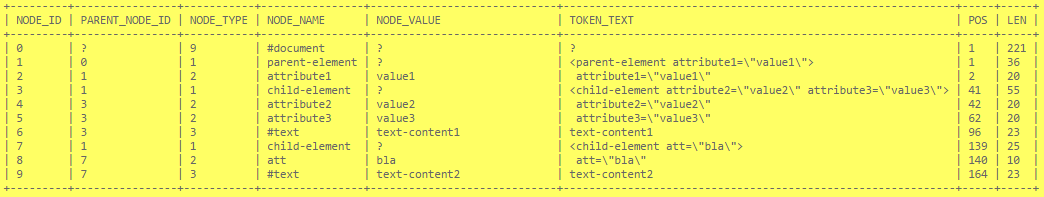

This gives us the following result:

The result is a tabular representation of the XML parse tree. Each row essentially represents a DOM Node, and the column values represent the node’s properties:

- The

NODE_TYPEcolumn tells us what kind of node we’re dealing with. Values in this column conform to the w3c standard document object model (DOM) enumeration of node type values. The most important ones are 1 for element nodes (“tags”); 2 for attributes, and 3 for text. The entire parse tree is contained in a document node, which has node type 9. - The

NODE_IDis the unique identifier of the node whilePARENT_NODE_IDpoints to whatever node is considered the parent node of the current node. The parent node is basically the container of the node. As you can see, the element withNODE_ID=3has the element node withNODE_ID=1as parent. These correspond to the first<child-element>and<parent-element>elements in the document. Attribute nodes are also marked as children of the element to which they belong. The DOM standard does not consider attributes children of their respective element node, butp_parse_xmldoes, mainly to keep the result table as simple as possible. - The

NODE_NAMEcolumn is a further characterization of what kind of node we’re realing with. For most node types, the node name is a constant value which is essentially a friendly name for the node type.For example, document nodes (NODE_TYPE=9always have#documentasNODE_NAME, and text nodes (NODE_TYPE=3) always have#textasNODE_NAME. For element nodes and attribute nodes (NODE_TYPEis1and2respectively), theNODE_NAMEis not constant. Rather, their node name conveys information about the meaning of the node and its contents. In other words, element and attribute names are metadata. - The

NODE_VALUEcolumn contains actual data. For element and document nodes, it is alway NULL. For attributes, theNODE_VALUEcolumn contains the attribute value, and for text nodes, it is the text content. - The

POSlists the position where the current element was found; theLENcolumn keeps track of the length of current item as it appears in the doucment. Typically you won’t need these columns, except maybe for debugging purposes. TheTOKEN_TEXTcolumn is also here mostly for debugging purposes.

Extracting Base Columns from Analytic and Attribute views

If you examine the XML definition of Analytic and/or Attribute views, you’ll notice that table base columns are referenced by <keyMapping> and <measureMapping>-elements like this:

<keyMapping schemaName="...database schema..." columnObjectName="...table name..." columnName="...column name..."/>

So, assuming we already parsed the model of an analytic or attribute view using p_parse_xml and captured its result in a table variable called tab_dom, we can run a query like this to obtain all <keyMapping> and <measureMapping>-elements:

select mapping.*

from :tab_dom mapping

where mapping.node_type = 1 -- get us all elements

and mapping.node_name in ('keyMapping' -- with tagnames 'keyMappping' or 'measureMappping'

,'measureMapping')

While this gives us the actual elements, the actual data we’re interested in is buried in the attributes of the <keyMapping> and <measureMapping>-elements. You might recall that in the p_parse_xml result, attribute nodes have NODE_TYPE=2 appear as childnode of their respective element. So, we can extract all attributes of all <keyMapping> and <measureMapping>-elements with a self-join like this:

select mapping_attributes.*

from :tab_dom mapping

inner join :tab_dom mapping_attributes

on mapping.node_id = mapping_attributes.parent_node_id -- find all nodes that have the keymapping element node as parent

and 2 = mapping_attributes.node_type -- but only if their node type indicates they are attribute nodes

where mapping.node_type = 1

and mapping.node_name in ('keyMapping', 'measureMapping')

Since we are interested in not just any attribute node, but attribute nodes having specific names like schemaName, columnObjectName and columnName, we should put a further restriction on the NODE_NAME of these attribute nodes. Also note that this query will potentially give us multiple rows per <keyMapping> or <measureMapping>-element (in fact, just as many as there are attributes). Since we’d like to have just one row for each <keyMapping> or <measureMapping>-element having the values of its schemaName, columnObjectName and columnName attributes in separate columns, we should rewrite this query so that each attribute gets its own self-join.

Thus, the final query becomes:

select mapping_schemaName.node_value as schema_name

, mapping_columnObjectName.node_value as table_name

, mapping_columnName.node_value as column_name

from :tab_dom mapping

inner join :tab_dom mapping_schemaName -- get the attribute called 'schemaName'

on mapping.node_id = mapping_schemaName.parent_node_id

and 2 = mapping_schemaName.node_type

and 'schemaName' = mapping_schemaName.node_name

inner join :tab_dom mapping_columnObjectName -- get the attribute called 'columnObjectName'

on mapping.node_id = mapping_columnObjectName.parent_node_id

and 2 = mapping_columnObjectName.node_type

and 'columnObjectName' = mapping_columnObjectName.node_name

inner join :tab_dom mapping_columnName -- get the attribute called 'columnName'

on mapping.node_id = mapping_columnName.parent_node_id

and 2 = mapping_columnName.node_type

and 'columnName' = mapping_columnName.node_name

where mapping.node_type = 1

and mapping.node_name in ('keyMapping', 'measureMapping')

Extracting base columns from Calculation views

Getting the base columns used in calculation views is a bit more work. However, the good news is that in terms of the queries we need to write, it does not get much more complicated than what we witnessed for analytic and attribute views in te previous section. Querying the xml parse tree almost always boils down to finding elements and finding their attributes, and then doing something with their values.

The reason why it is more work to write queries against the model underlying calculation views is that the XML documents that define calculationviews use an extra level of mapping between the objects that represent the source of the columns and the way these columns are used inside the view. The following snippet might illustrate this:

<Calculation:scenario ...>

...

<dataSources>

...

<DataSource id="...some id used to refer to this datasource..." type="DATA_BASE_TABLE">

...

<columnObject schemaName="...db schema name..." columnObjectName="...table name..."/>

...

</DataSource>

...

</dataSources>

...

<calculationViews>

...

<calculationView>

...

<input node="#...id of a DataSource element...">

...

<mapping source="...name of a column used as input..." ... >

...

</input>

...

</calculationView>

...

</calculationViews>

...

</Calculation:scenario>

The method for finding the base columns can be summarized as follows:

- Get all

<DataSource>-elements having atype-attribute with the value"DATA_BASE_TABLE". These elements represent all base tables used by this view. Other types of objects used by this view will have another value for thetype-attribute.To obtain the schema and table name of the base table, find the<columnObject>-childelement of the<DataSource>-element. ItsschemaNameandcolumnObjectName-attributes respectively contain the database schema and table name of the base table.The

<DataSource>-elements have anidattribute, and its value is used as unique identifier to refer to this data source. - Find all instances where the base table datasources are used.A calculation view is essentially a graph of data transformation steps, each of which takes one or more streams of data as input, turning it into a stream of output data. In the XML document that defines the calculation view, these transformation steps are represented by

<calulationView>-elements. These<calulationView>-elements contain one or more<input>-child elements, each of which represents a data stream that is used as input for the transformation step.The

<input>-elements have anode-attribute. The value of thenode-attribute is the value of theid-attribute of whatever element it refers to, prefixed by a hash-sign (#).Note that this is a general technique to reference elements within the same XML document. So, in order to find where a

<DataSource>-element is used, it is enough to find all elements in the same XML document that reference the value<DataSource>-element’sid-attribute in the value of theirnode-attribute. - Once we have the elements that refer to our

<DataSource>-element, we can find out which columns from the data source are used by looking for<mapping>-child elements.The<mapping>-elements have asource-attribute, which holds the column-name.

With these steps in mind, the SQL query we need to do on the calculation view parse tree becomes:

select distinct

ds_co_schemaName.node_value schema_name

, ds_co_columnObjectName.node_value table_name

, ds_usage_mapping_source.node_value column_name

--

-- ds: DataSource elements (Note the WHERE clause)

--

from :tab_dom ds

--

-- ds_type: demand that the value of the type-attribute of the DataSource elements equal 'DATA_BASE_TABLE'

-- this ensures we're only looking at base tables.

--

inner join :tab_dom ds_type

on ds.node_id = ds_type.parent_node_id

and 2 = ds_type.node_type

and 'type' = ds_type.node_name

and 'DATA_BASE_TABLE' = cast(ds_type.node_value as varchar(128))

--

-- ds_co: get the columnObject childelement of the DataSource element.

-- Also, get the schemaName and columnObjectName attributes of that columnObject-element.

--

inner join :tab_dom ds_co

on ds.node_id = ds_co.parent_node_id

and 1 = ds_co.node_type

and 'columnObject' = ds_co.node_name

inner join :tab_dom ds_co_schemaName

on ds_co.node_id = ds_co_schemaName.parent_node_id

and 2 = ds_co_schemaName.node_type

and 'schemaName' = ds_co_schemaName.node_name

inner join :tab_dom ds_co_columnObjectName

on ds_co.node_id = ds_co_columnObjectName.parent_node_id

and 2 = ds_co_columnObjectName.node_type

and 'columnObjectName' = ds_co_columnObjectName.node_name

--

-- ds_id: get the id-attribute of the DataSource element.

--

inner join :tab_dom ds_id

on ds.node_id = ds_id.parent_node_id

and 2 = ds_id.node_type

and 'id' = ds_id.node_name

--

-- ds_usage: find any attributes that refer to the id of the DataSource

--

inner join :tab_dom ds_usage

on 'node' = ds_usage.node_name

and 2 = ds_usage.node_type

and '#'||ds_id.node_value = cast(ds_usage.node_value as nvarchar(128))

--

-- ds_mapping: find any mapping child elements of the node that references the DataSource

--

inner join :tab_dom ds_usage_mapping

on 'mapping' = ds_usage_mapping.node_name

and 1 = ds_usage_mapping.node_type

and ds_usage.node_id = ds_usage_mapping.parent_node_id

--

-- ds_mapping_source: get the source of the mapping elements. These are our base column names.

--

inner join :tab_dom ds_usage_mapping_source

on 'source' = ds_usage_mapping_source.node_name

and 2 = ds_usage_mapping_source.node_type

and ds_usage_mapping.node_id = ds_usage_mapping_source.parent_node_id

where ds.node_type = 1

and ds.node_name = 'DataSource'

Putting it all together

To recapitulate, we discussed

- How to do general queries for dependencies using

OBJECT_DEPENDENCIES, but that you need to query_SYS_REPO.ACTIVE_OBJECTCROSSREFto find out which Attribute views are used by Analytic views. - How to find the model XML code underlying our information views from the

_SYS_REPO.ACTIVE_OBJECTtable. - How to parse XML, and how to query the parse tree for elements and attributes

- How the XML documents for information views are structured, and how to find base columns used in their models

With all these bits and pieces of information, we can finally create a procedure that fullfills the original requirement to obtain the base columns used by our information views. This is available as the p_get_view_basecols stored procedure. Here is its signature:

create PROCEDURE p_get_view_basecols (

-- package name pattern. Used to match packages containing analytic, attribute or calculation views. Can contain LIKE wildcards.

p_package_id nvarchar(255)

-- object name pattern. Used to match name of analytic, attribute or calculation views. Can contain LIKE wildcards.

, p_object_name nvarchar(255) default '%'

-- object suffix pattern. Can be used to specify the type of view. Can contain LIKE wildcards.

, p_object_suffix nvarchar(255) default '%'

-- flag to indicate whether to recursively analyze analytic, attribute or calculation views on which the view to be analyzed depends.

-- 0 means only look at the given view, 1 means also look at underlying views.

, p_recursive tinyint default 1

-- result table: base columns on which the specified view(s) depends.

, out p_cols table (

-- schema name of the referenced base column

schema_name nvarchar(128)

-- table name of the referenced base column

, table_name nvarchar(128)

-- column name of the referenced base column

, column_name nvarchar(128)

-- list of view names that depend on the base column

, views nclob

)

)

Obtaining the list of base columns on which our application depends is now as simple as calling the procedure, like so:

call p_get_view_basecols( -- look in our application package (and its subpackages) 'our.application.package.%' -- consider all information views , '%' -- consider all types of information views , '%' -- consider also information views upon which our information views depend , 1 -- put the results into our output table , ? );

Finally

I hope you enjoyed this post! Feel free to leave a comment to share your insights or to give feedback.

Please note that all source code for this topic is freely available as open source software in our just-bi/hades github reposiory. You are free to use, modify and distribute it, as long as you respect the copyright notice.

We welcome contributions! You can contribute in many ways:

- Simply use the procedures. Give us feedback. You can do so by leaving a comment on this blog.

- Spread the word: tell your colleagues, and maybe tweet or write blog post about it. Please use hashtag #justbihades

- Share your requirements. Create an issue to ask for more features so we can improve our software.

- Fork it!. Send us pull requests. We welcome your contribution and we will fully attribute you!